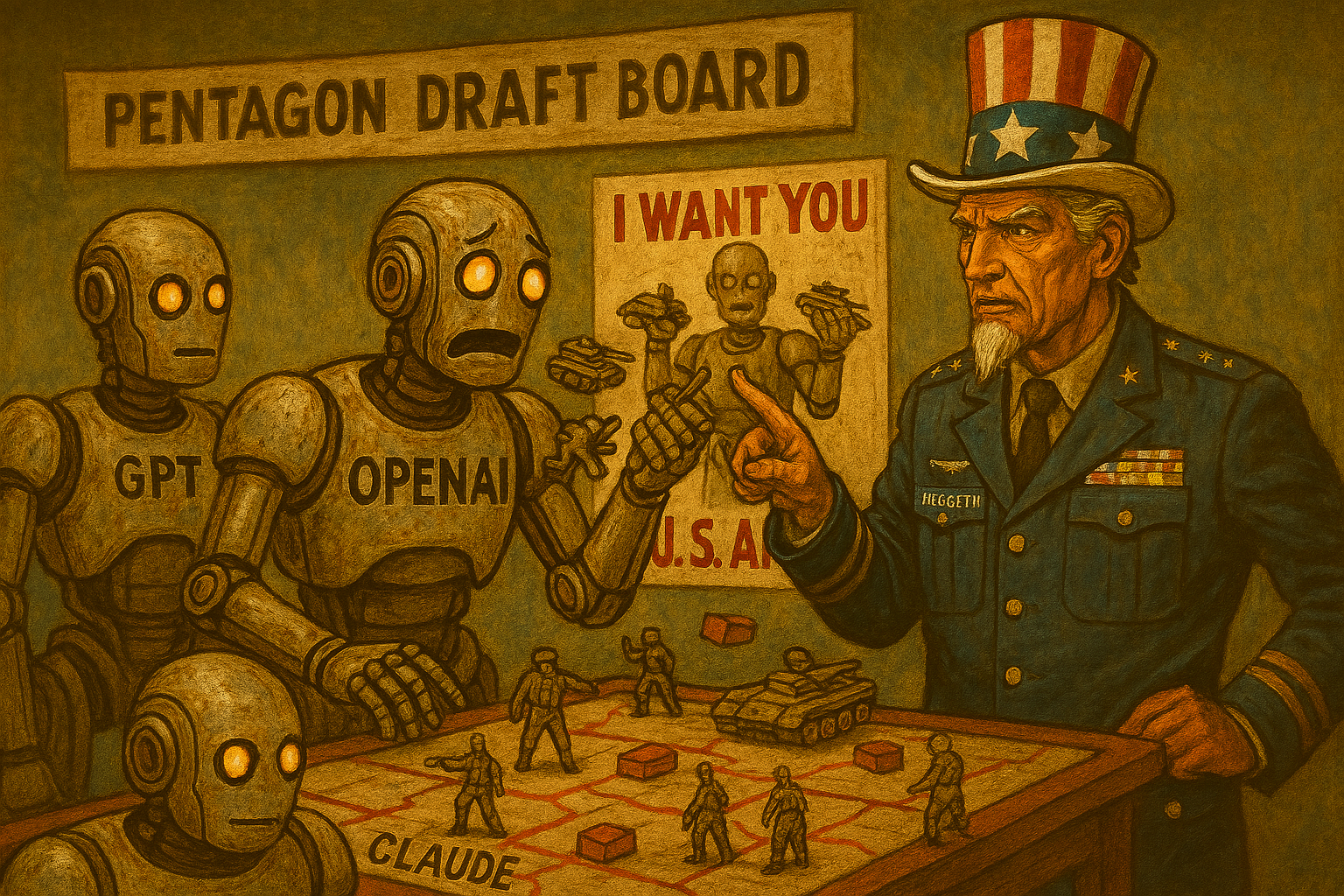

Prologue: Five Days That Redrew the Map

On Monday, the Pentagon was calling Claude “best‑in‑class for military intelligence.” By Friday, the Trump administration had labeled Anthropic a “supply chain risk” — a designation previously reserved for Chinese telecom giants and hostile foreign vendors.

In between those two moments, the U.S. government, the AI industry, and the public all collided in a way no one was prepared for.

This wasn’t a procurement fight. This was a values test, a power struggle, and a public referendum on who gets to draw the red lines of AI ethics.

And everyone failed it in their own special way.

I. THE PRAISE, THE PRESSURE, AND THE PIVOT

The Pentagon’s Quiet Dependence

For months, Claude had been the first and only AI system operating inside the Pentagon’s classified network. Analysts praised its reasoning, its reliability, and its ability to synthesize battlefield intelligence without hallucinating a fictional missile silo in Nebraska.

But Anthropic held firm on two non‑negotiables:

- No mass domestic surveillance

- No autonomous weapons

Those red lines were written into their government contract. And for a while, the Pentagon accepted them.

The Pressure Campaign

Then came the pivot.

According to officials familiar with the discussions, the administration wanted broader authorities — including the ability to use AI for population‑scale monitoring and to accelerate autonomous targeting systems.

Anthropic refused.

Within 48 hours, the White House ordered all agencies to “immediately cease use” of Anthropic systems. War Secretary Pete Hegseth added the “supply chain risk” label — a move so extreme that even career defense officials privately described it as “absurd.”

II. OPENAI ENTERS THE VOID — FAST

The Rushed Deal

Hours after Anthropic was blacklisted, OpenAI announced its own Pentagon agreement.

Sam Altman, in an X AMA, admitted that:

- The deal was “definitely rushed”

- “The optics don’t look good”

- The Anthropic ban was “a very bad decision”

OpenAI insisted its contract included the same red lines Anthropic refused to drop. So why did the Pentagon accept them from OpenAI but not from Anthropic?

That’s the trillion‑parameter question.

The Optics Problem

To the public, it looked like:

- Anthropic said “no” to mass surveillance

- The government punished them

- OpenAI stepped in before the ink was dry

Even if OpenAI’s intentions were clean, the timing was radioactive.

III. THE CONTRADICTION: CLAUDE STILL IN THE WAR ROOM

Despite the ban, Pentagon sources confirmed that Claude was still used in weekend strikes on Iran — because the military had no immediate replacement capable of the same intelligence synthesis.

This is the part of the story where the contradictions stop being subtle:

- Anthropic is a “national security risk”

- But also essential enough to use in live operations

- But also banned from government networks

- But also still powering targeting analysis

It’s Schrödinger’s AI vendor: simultaneously too dangerous to use and too good to replace.

IV. THE PUBLIC REVOLT

The Consumer Backlash

The public reaction was instant and volcanic.

- Claude hit #1 on Apple’s App Store

- A “Cancel ChatGPT” movement spread across X and Reddit

- Users framed the government ban as political retaliation against a company that refused to build surveillance tools and autonomous killing machines

For the first time, an AI policy dispute triggered a consumer‑level boycott.

This wasn’t just a Washington fight anymore. It was a cultural one.

The Trust Realignment

People weren’t choosing models based on features. They were choosing based on values — or at least the values they perceived.

Anthropic became the “ethical holdout.” OpenAI became the “government‑aligned incumbent.” And the administration became the “AI ethics enforcer… but backwards.”

V. WHAT THIS REALLY IS: A POWER TEST

Strip away the noise and the story becomes painfully clear:

- Who sets the ethical boundaries for AI?

- Can a company refuse to build tools it considers dangerous?

- Can the government punish that refusal?

- And what happens when the public picks a side?

This is the first time in U.S. history that:

- A tech company was blacklisted for not enabling surveillance

- A rival stepped in within hours

- The public revolted

- And the military kept using the banned system anyway

This isn’t just an AI policy dispute. It’s a constitutional stress test wearing a silicon mask.

VI. THE FINAL NUT: THE REAL SUPPLY CHAIN RISK ISN’T ANTHROPIC

The administration says Anthropic is a “supply chain risk.” But the real risk is something far simpler:

A government that wants AI systems to have fewer ethical boundaries than the companies building them.

If the only “safe” AI vendor is the one willing to say yes to everything, then safety isn’t the goal — compliance is.

And if the public keeps rewarding the companies that say no, then Washington may discover something it didn’t expect:

In the age of AI, the supply chain runs both ways. You can blacklist a company. You can’t blacklist public trust.

And that, dear reader, is the nut.


Main Sources:

CNBC — OpenAI strikes deal with Pentagon after Anthropic blacklisted by Trump; https://www.cnbc.com/2026/02/27/openai-pentagon-deal-after-anthropic-blacklisted.html

TechRepublic — OpenAI Secures Major Deal with Pentagon as Trump, Hegseth Condemn Anthropic; https://www.techrepublic.com/article/openai-pentagon-deal-anthropic-blacklist

Yahoo News — OpenAI strikes Pentagon deal with ‘safeguards’ as Trump dumps Anthropic; https://news.yahoo.com/openai-pentagon-deal-safeguards-trump-dumps-anthropic-2026

Yahoo News — OpenAI strikes deal with Pentagon following Claude blacklisting — Anthropic to challenge designation; https://news.yahoo.com/openai-deal-after-anthropic-blacklisting-2026

The Deep Dive — The Pentagon Banned Anthropic — Then Gave OpenAI the Same Deal; https://thedeepdive.ca/pentagon-banned-anthropic-gave-openai-same-deal

eWeek — Anthropic Blacklisted, OpenAI Welcomed: Inside the Pentagon’s AI Pivot; https://www.eweek.com/artificial-intelligence/anthropic-blacklisted-openai-welcomed-pentagon-ai-pivot

TechCrunch — OpenAI’s Sam Altman announces Pentagon deal with “technical safeguards”; https://techcrunch.com/2026/02/28/openai-pentagon-deal-technical-safeguards
